Your AI is wrong. We prove it.

ADKYN tests LLM applications and AI agents for hallucinations, prompt vulnerabilities, and reliability failures. Deploy with confidence.

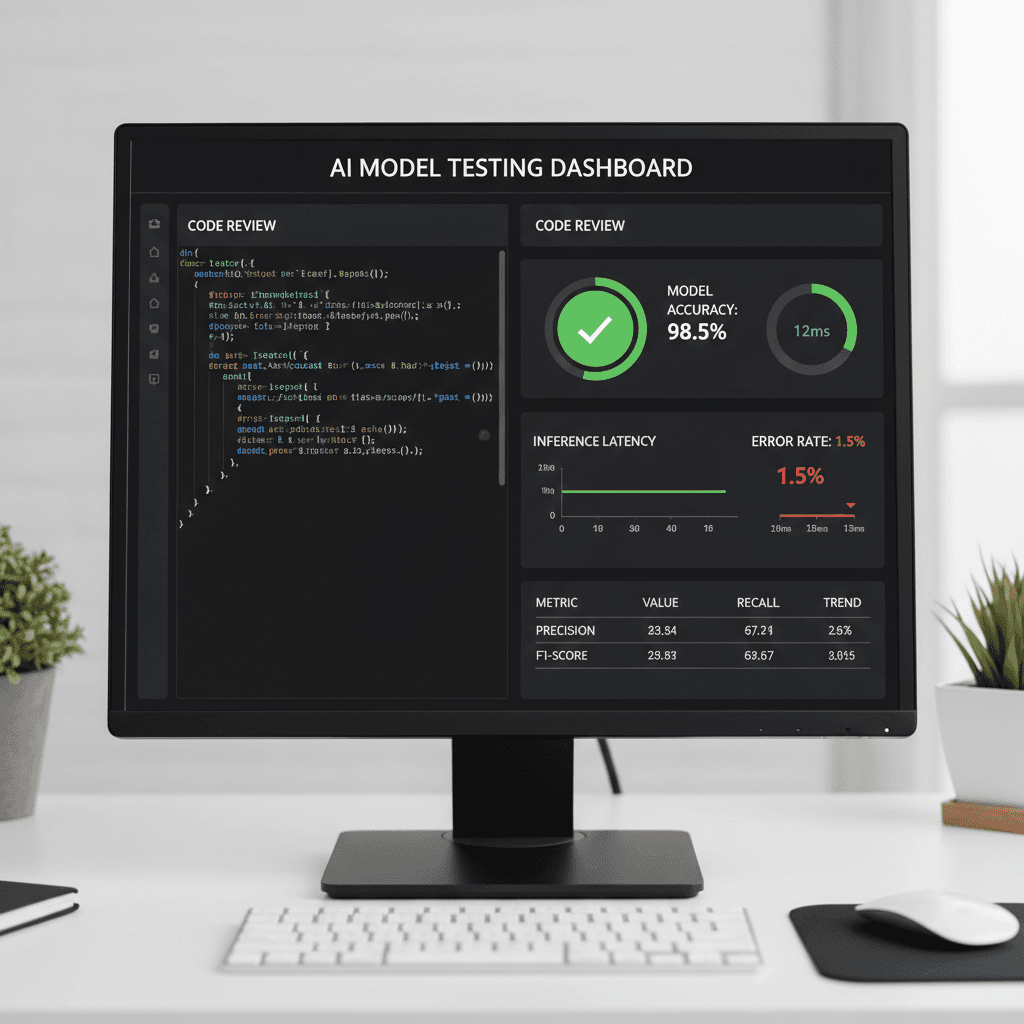

AI Testing and Reliability Evaluation

ADKYN identifies failure points in your AI systems before they reach users. We test for hallucinations, vulnerabilities, and edge cases that break performance.

LLM Testing

- hallucination detection
- prompt robustness testing
- adversarial inputs

AI Agent Testing

- task completion reliability
- tool-use validation
- reasoning failure detection

Ongoing Monitoring Support

- automated test harness
- regression testing
- benchmark datasets

How it works

ADKYN evaluates your AI systems for hallucinations, robustness to adversarial prompts, and agent task reliability, catching failures before they reach production.

Before your AI reaches users, test it.

Contact ADKYN today to schedule a reliability evaluation and ensure your LLM applications, AI agents, and generative AI products perform securely and accurately in production.